From Data Science to Knowledge Science

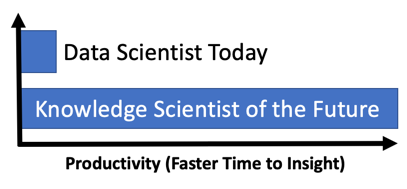

I believe that within five years there will be dramatic growth in a new field called Knowledge Science. Knowledge scientists will be ten times more productive than today’s data scientists because they will be able to make a new set of assumptions about the inputs to their models and they will be able to quickly store their insights in a knowledge graph for others to use. Knowledge scientists will be able to assume their input features:

- have higher quality

- are harmonized for consistency

- are normalized to be within well-defined ranges

- remain highly connected to other relevant data, such as provenance and lineage metadata

Anyone with the title “Data Scientist” can tell you that the job is often not as glamorous as you might think. An article in the New York Times pointed out:

> Data scientists…spend from 50 percent to 80 percent of their time mired in this more mundane labor of collecting and preparing unruly digital data, before it can be explored for useful nuggets.

The process of collecting and preparing data for analysis is frequently called “Data Janitorial” work, since it is associated with cleaning up data. In many companies, the problem could be mitigated if data scientists shared their data cleanup code. Unfortunately, different groups within a company often use different analysis tools: Microsoft Excel, Excel plugins, SAS, R, Python, or any number of other statistical software packages. Within the Python community alone, there are thousands of libraries for statistical analysis and machine learning. Deep learning, although mostly done in Python, has dozens of libraries to choose from. With all these options, the chances of two groups sharing code or the results of their data cleanup are pretty small.

There have been some notable efforts to help data scientists find and reuse data artifacts. The emergence of products in the Feature Store space is a good example of an attempt to build reusable artifacts that make data scientists more productive. Both Google and Uber have discussed their efforts to build tools to reuse features and standardize the feature engineering process. My big concern is that many of these efforts are focused on building flat files of disconnected data. Once features have been generated, they can quickly lose their relationships to the real world.

An alternative approach is to build a set of tools that let analysts connect directly to a well-formed, enterprise-scale knowledge graph, extract a subset of data, and quickly transform it into structures that are immediately useful for analysis. The results of this analysis can then be written back to enrich the knowledge graph. These pure machine learning approaches can complement the rich library of turn-key graph algorithms that are accessible to developers.
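The round trip described above (extract a subset of the graph, reshape it for analysis, write the result back) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the in-memory `nodes` and `edges` dictionaries are a hypothetical stand-in for a real knowledge graph, `extract_features` and `write_back` are illustrative names rather than any product's API, and the `heavyCaller` rule is a toy placeholder for whatever model an analyst would actually train.

```python
# Hypothetical in-memory knowledge graph: node properties, plus
# (source, edge label) -> [targets] for relationships.
nodes = {
    "CUST-1": {"type": "Customer"},
    "CUST-2": {"type": "Customer"},
    "E1": {"type": "InboundCall", "quality": 92},
    "E2": {"type": "InboundCall", "quality": 88},
    "E3": {"type": "Purchase", "quality": 75},
}
edges = {("CUST-1", "HAS_EVENT"): ["E1", "E2"], ("CUST-2", "HAS_EVENT"): ["E3"]}

def extract_features(min_quality: int = 70) -> list[dict]:
    """Pull a subset of the graph into flat rows that are ready for analysis."""
    rows = []
    for node_id, props in nodes.items():
        if props["type"] != "Customer":
            continue
        # Only use connected events whose quality score is above the threshold.
        events = [nodes[e] for e in edges.get((node_id, "HAS_EVENT"), [])
                  if nodes[e]["quality"] > min_quality]
        calls = sum(1 for e in events if e["type"] == "InboundCall")
        rows.append({"id": node_id, "calls": calls, "events": len(events)})
    return rows

def write_back(rows: list[dict]) -> None:
    """Enrich the graph with a derived property (a toy stand-in for a model output)."""
    for row in rows:
        nodes[row["id"]]["heavyCaller"] = row["calls"] >= 2

rows = extract_features()
write_back(rows)
print(rows)
```

Because the derived property lands back on the customer node, the next analyst who queries the graph sees it alongside the events it was computed from.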

What is at stake here is huge gains in productivity. If 50 to 80 percent of your time is currently spent on janitorial work, a knowledge scientist with access to a knowledge graph of high-quality, connected, and normalized data could become two to five times more productive. Let’s define what we mean by high-quality, connected, and normalized data. First, let’s talk about data quality within a knowledge graph.
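The productivity estimate rests on simple arithmetic. If a fraction f of your working time goes to janitorial work and that work disappeared entirely, the remaining analysis would take (1 - f) of the original time, for a best-case speedup of 1 / (1 - f). The 50 to 80 percent range from the New York Times quote therefore gives an upper bound of 2x to 5x; if only part of the janitorial work goes away, the gain is smaller. The function names below are illustrative.

```python
def best_case_speedup(janitorial_fraction: float) -> float:
    """Speedup if a fraction f of working time is eliminated entirely: 1 / (1 - f).

    This is an upper bound; it assumes *all* janitorial work disappears.
    """
    if not 0.0 <= janitorial_fraction < 1.0:
        raise ValueError("fraction must be in [0, 1)")
    return 1.0 / (1.0 - janitorial_fraction)

def partial_speedup(janitorial_fraction: float, fraction_removed: float) -> float:
    """Speedup if only part of the janitorial work is eliminated."""
    remaining_time = 1.0 - janitorial_fraction * fraction_removed
    return 1.0 / remaining_time

for f in (0.5, 0.8):
    print(f"{f:.0%} janitorial -> up to {best_case_speedup(f):.1f}x")
```

For example, `partial_speedup(0.8, 0.75)` models a team that spends 80 percent of its time on cleanup and eliminates three quarters of it, which yields 2.5x rather than 5x.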

I worked as a Principal Consultant for MarkLogic for several years. For those of you who have not heard of MarkLogic, it is a document store where all data is natively stored as JSON or XML documents. MarkLogic has many exceptional features that promote the productivity of developers doing data analysis. Two of the most important are the document-level data quality score and implicit, query-language-level validation of both simple and complex data structures. In MarkLogic, there is a built-in metadata element called the data quality score. The data quality score is usually an integer between 1 and 100 that is set as new data enters the system. A low score, say below 50, indicates that there are quality problems with the document: it might be missing data elements, have fields outside acceptable ranges, or contain corrupt or inconsistent data. A score of 90 could indicate that the document is very good and could be used for many downstream processes. For example, you might set up a rule that only documents with a score above 70 are used in search or analysis.
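A document-level quality score like the one described above could be computed at ingest time with a handful of rules. This is a minimal sketch, assuming a hypothetical claim document stored as JSON, a hypothetical rule set (required fields and an allowed range for `amount`), and made-up penalty weights. It keeps the score in an ordinary `quality` field rather than using MarkLogic's own metadata, and a data steward would tune all of these choices.

```python
from typing import Any

# Hypothetical rules for a JSON "claim" document; a data steward would
# define rules like these for each document type.
REQUIRED_FIELDS = ("claimId", "memberId", "amount", "serviceDate")
RANGES = {"amount": (0.0, 1_000_000.0)}   # acceptable range per field

def quality_score(doc: dict[str, Any]) -> int:
    """Return an integer score in [1, 100]; a lower score means more problems."""
    score = 100
    for field in REQUIRED_FIELDS:
        if doc.get(field) in (None, ""):
            score -= 25                     # missing data element
    for field, (lo, hi) in RANGES.items():
        value = doc.get(field)
        if isinstance(value, (int, float)) and not lo <= value <= hi:
            score -= 30                     # field outside acceptable range
    return max(1, min(100, score))

def usable_for_analysis(docs: list[dict[str, Any]], threshold: int = 70) -> list[dict[str, Any]]:
    """Keep only documents whose stored score is above the threshold."""
    return [d for d in docs if d["quality"] > threshold]

docs = [
    {"claimId": "C1", "memberId": "M1", "amount": 120.0, "serviceDate": "2020-01-15"},
    {"claimId": "C2", "amount": -5.0},
]
for d in docs:
    d["quality"] = quality_score(d)         # score is set as data enters
print([d["claimId"] for d in usable_for_analysis(docs)])  # ['C1']
```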

There are two clever things about how MarkLogic works. First, the concept of a validation schema is built into MarkLogic, and the concept of valid data is even built into XQuery, the W3C document query language. Second, each document can be associated with a root element (within a namespace) and thereby bound to an implicit set of rules about that document. You can use a GUI editor like the oXygen XML Schema editor to allow non-programmers to create and audit the data quality rules. XML Schema validation can produce both a true/false result and a count of the errors in the document. Together with tools like Schematron and external data checks, each data steward can decide how to set the data quality score for various documents.
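Validation that reports both a true/false result and an error count can be sketched with Python's standard library. This is not XML Schema validation (ElementTree cannot validate against an XSD); it is a hand-written set of Schematron-style assertions, using a hypothetical namespace, hypothetical rules, and a hypothetical steward policy that subtracts 20 points per error.

```python
import xml.etree.ElementTree as ET

NS = {"c": "http://example.com/claim"}   # hypothetical namespace

# Schematron-style assertions: (message, path that must match an element).
RULES = [
    ("claim must have an id", "c:id"),
    ("claim must reference a member", "c:member/c:id"),
    ("claim must have an amount", "c:amount"),
]

def validate(xml_text: str) -> tuple[bool, int, list[str]]:
    """Return (is_valid, error_count, messages) for one claim document."""
    root = ET.fromstring(xml_text)
    errors = []
    if root.tag != f"{{{NS['c']}}}claim":        # rules are bound via the root element
        errors.append("unexpected root element")
    for message, path in RULES:
        if root.find(path, NS) is None:
            errors.append(message)
    amount = root.findtext("c:amount", namespaces=NS)
    if amount is not None:
        try:
            if float(amount) < 0:
                errors.append("amount must be non-negative")
        except ValueError:
            errors.append("amount must be numeric")
    return (not errors, len(errors), errors)

def steward_score(error_count: int) -> int:
    """Hypothetical policy: start at 100 and subtract 20 per error, floor of 1."""
    return max(1, 100 - 20 * error_count)

sample = """<claim xmlns="http://example.com/claim">
  <id>C7</id>
  <amount>-12.50</amount>
</claim>"""
valid, count, messages = validate(sample)
print(valid, count, steward_score(count))   # False 2 60
```

Returning the individual messages as well as the count lets a steward weight some failures more heavily than others instead of treating every error the same.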

Document-level data quality scores are also a natural fit with business events. Business events can be thought of as complete transactions that happen in your business workflows. Examples of business events include an inbound call to your call center, a new customer purchase, a subscription renewal, enrollment in a new healthcare plan, the filing of a new claim, or an offer sent as part of a promotional campaign. All of these events can be captured as complete documents, stored in streaming systems like Kafka, and loaded directly into your knowledge graph. You only need to subscribe to a Kafka topic to ingest the business event data into your knowledge graph. The data quality scores can be included in both the business event documents and your knowledge graph. These scores should then be used whenever you are doing data analysis.
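Ingesting business event documents into a graph can be sketched without a running Kafka cluster. In this minimal sketch the `messages` list is a hypothetical stand-in for records read from a Kafka topic (a real consumer, such as kafka-python's `KafkaConsumer`, would supply them), and `KnowledgeGraph` is a toy in-memory property graph, not a real product's API. The point it illustrates is that each event arrives as one complete document and keeps its quality score when it becomes a node linked to the customer.

```python
import json
from collections import defaultdict

# Hypothetical stand-in for records consumed from a "business-events" topic.
messages = [
    '{"eventId": "E1", "eventType": "SubscriptionRenewal", "customerId": "CUST-42", "quality": 92}',
    '{"eventId": "E2", "eventType": "InboundCall", "customerId": "CUST-42", "quality": 55}',
]

class KnowledgeGraph:
    """Toy in-memory property graph: node properties plus labeled edges."""

    def __init__(self) -> None:
        self.nodes = {}                   # node id -> property dict
        self.edges = defaultdict(set)     # (source, label) -> set of targets

    def upsert_node(self, node_id: str, **props) -> None:
        self.nodes.setdefault(node_id, {}).update(props)

    def add_edge(self, source: str, label: str, target: str) -> None:
        self.edges[(source, label)].add(target)

def ingest(graph: KnowledgeGraph, raw: str) -> None:
    """Load one complete business event document, keeping its quality score."""
    event = json.loads(raw)
    graph.upsert_node(event["eventId"], type=event["eventType"], quality=event["quality"])
    graph.upsert_node(event["customerId"], type="Customer")
    graph.add_edge(event["customerId"], "HAS_EVENT", event["eventId"])

kg = KnowledgeGraph()
for raw in messages:
    ingest(kg, raw)

# Analysis can then filter on the score that arrived with each event.
trusted = sorted(e for e in kg.edges[("CUST-42", "HAS_EVENT")] if kg.nodes[e]["quality"] > 70)
print(trusted)  # ['E1']
```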

We should contrast complete and consistent business event documents with another form of data exchange: full table-by-table dumps from a relational database into a set of CSV files. These files are full of numeric codes that may not have a clear meaning. This is the type of data many data scientists find in a Data Lake today. Turning this low-level data back into meaningful, connected knowledge slows the time to insight for many organizations.
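The cost of working from coded table dumps shows up as soon as you try to rebuild a meaningful record. In this hypothetical example, one row from a `claims` CSV carries only numeric codes, and the lookup tables (`STATUS_CODES`, `PLAN_CODES`, `MEMBERS`) are invented for illustration; in practice each would come from another dump that has to be found, understood, and joined back in.

```python
import csv
import io

# Hypothetical one-row dump of a "claims" table: every value is a code.
claims_csv = "claim_id,member_id,status_cd,plan_cd\n1001,77,3,12\n"

# Hypothetical code tables; in practice each comes from yet another dump.
STATUS_CODES = {"3": "Denied"}
PLAN_CODES = {"12": "Gold PPO"}
MEMBERS = {"77": {"memberId": "77", "name": "Example Member"}}

def to_document(row: dict[str, str]) -> dict:
    """Rebuild a self-describing record by joining each code back to its meaning."""
    return {
        "claimId": row["claim_id"],
        "status": STATUS_CODES.get(row["status_cd"], "UNKNOWN"),
        "plan": PLAN_CODES.get(row["plan_cd"], "UNKNOWN"),
        "member": MEMBERS.get(row["member_id"], {}),
    }

docs = [to_document(row) for row in csv.DictReader(io.StringIO(claims_csv))]
print(docs[0]["status"], docs[0]["plan"])  # Denied Gold PPO
```

Every lookup table that is missing or out of date turns into an `UNKNOWN`, a gap that a business event document avoids by carrying its meaning with it.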

Storing flattened CSV-level data and numeric codes is where feature stores fall down. Once features are extracted and stored in your data lake or object store, they become disconnected from how they were created. A new process might run on the knowledge graph that raises or lowers the score associated with a data item, but the extracted feature can’t easily be updated to reflect the new score. Feature stores can add latency that prevents new data quality scores from reflecting the current reality.
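The staleness problem can be shown in a few lines. This sketch uses plain dictionaries as hypothetical stand-ins for quality scores held in a knowledge graph and for a feature table exported from it; the event IDs and scores are made up.

```python
# Hypothetical live quality scores held in the knowledge graph.
graph_scores = {"E1": 92, "E2": 55}

# Feature-store style export: values copied into a flat, disconnected table.
feature_table = {event_id: score for event_id, score in graph_scores.items()}

# Later, a data-quality process in the graph lowers the score for E1.
graph_scores["E1"] = 40

def live_quality(event_id: str) -> int:
    """A feature that keeps its link to the graph reads the current value."""
    return graph_scores[event_id]

print(feature_table["E1"], live_quality("E1"))  # 92 40
```

Keeping a reference back to the graph (here, just the event ID) trades some lookup cost for freshness; the copied table is faster to read but silently drifts from the graph.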

One of the things I learned at MarkLogic is that document modeling and data quality scoring did not come easily to developers who have been using tables to store data. Relational data architects tend not to think about the value of document models or of associating a data quality score with business event documents. I like to refer to business event document modeling as “on-the-wire” thinking. On-the-wire thinking should be contrasted with “in-the-can” thinking, where data architects stress over how to minimize joins to keep query times within required service levels.

There are two final points before we wrap up. First, quality in a graph is different from quality in a document. This topic has been well studied by my colleagues at the World Wide Web Consortium (W3C) and documented in the RDF-based Shapes Constraint Language (SHACL). In summary, the connectedness of a vertex in a graph also determines its quality, and this connectedness is not expressed in a document schema. The W3C has also spent considerable time building PROV, a data model that describes provenance. Eventually, I hope that every knowledge graph product will have a robust metadata layer with complete information about how data was collected and transformed as it journeyed from a source system into the knowledge graph for analysis.
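A connectedness rule of the kind SHACL expresses can be sketched outside RDF. In SHACL proper, a node shape using `sh:targetClass`, `sh:property`, `sh:path`, and `sh:minCount` would state, for example, that every customer must have at least one account, and a SHACL engine would produce the validation report. The Python below is only a toy approximation of that single `sh:minCount` constraint over a hypothetical in-memory graph; `PropertyShape` and `validate_shapes` are illustrative names, not part of any SHACL library.

```python
from dataclasses import dataclass

@dataclass
class PropertyShape:
    """Loosely modeled on a SHACL property shape with sh:minCount."""
    target_class: str   # like sh:targetClass on the enclosing node shape
    path: str           # edge label, like sh:path
    min_count: int      # like sh:minCount

# Hypothetical graph: node -> class, and (node, edge label) -> targets.
node_class = {"CUST-1": "Customer", "CUST-2": "Customer", "ACCT-9": "Account"}
edges = {("CUST-1", "hasAccount"): ["ACCT-9"]}

def validate_shapes(shapes: list[PropertyShape]) -> list[tuple[str, str]]:
    """Return (focus node, message) pairs, one per violation."""
    report = []
    for shape in shapes:
        for node, cls in node_class.items():
            if cls != shape.target_class:
                continue
            count = len(edges.get((node, shape.path), []))
            if count < shape.min_count:
                report.append((node, f"{shape.path}: {count} < minCount {shape.min_count}"))
    return report

print(validate_shapes([PropertyShape("Customer", "hasAccount", 1)]))
# [('CUST-2', 'hasAccount: 0 < minCount 1')]
```

Note that `CUST-2` could be a perfectly valid document on its own; it only fails once its missing connections are checked, which is the difference between document quality and graph quality.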

The final point is that the labeled property graph (LPG) ecosystem does not yet have standards as mature as those of RDF. RDF had a 10-year head start over LPG, and this was enough for the semantic web stack to mature and blossom. In the LPG space, we don’t yet have mature machine-learning integration tools to enable knowledge science. If you are an entrepreneur looking to start a new company, this is a greenfield without much competition! Let me know if I can help you get started!

I am indebted to my friend and UHC colleague, Steven J. Peterson, for his patient evangelism of the importance of on-the-wire business event document modeling. Steve is a wonderful teacher, a consistent preacher of good design, a hands-on practitioner, and an insightful scholar. I cherish both his friendship and his willingness to share his knowledge.